When choosing an online betting or casino platform, access to accurate information is always a priority. Cratosroyalbet has emerged in recent years as one of the platforms attracting attention among Turkish users. This detailed review presents objective information about the services the platform offers, its reliability, its bonus campaigns, and user experiences.

The questions users most often ask about the platform are addressed here, from the new membership process to day-to-day use. This guide covers everything from cratosroyalbet login procedures and deposit methods to sports betting and casino games.

Similar to how platforms in the online gaming and streaming sectors operate, understanding the features and safety measures of betting platforms is crucial for users making informed decisions.

What is CratosRoyalbet and What Does It Offer?

General Information About the Platform

Cratosroyalbet is an online betting platform that offers users various options in sports betting and casino games. The platform stands out with its user-friendly interface and wide game range. Especially thanks to its mobile-compatible structure, users can access the platform from anywhere.

Services offered by the platform include live betting options, slot games, and live casino tables. Users are recommended to conduct careful research regarding cratosroyalbet license information and legal status. Checking license documents and regulatory authorities is an important step for choosing a reliable platform.

The platform aims to provide 24/7 support to its users as part of a customer satisfaction-focused approach. Available information about Cratosroyalbet suggests that the platform’s technical infrastructure has been built with modern technologies.

CratosRoyalbet’s Prominent Features

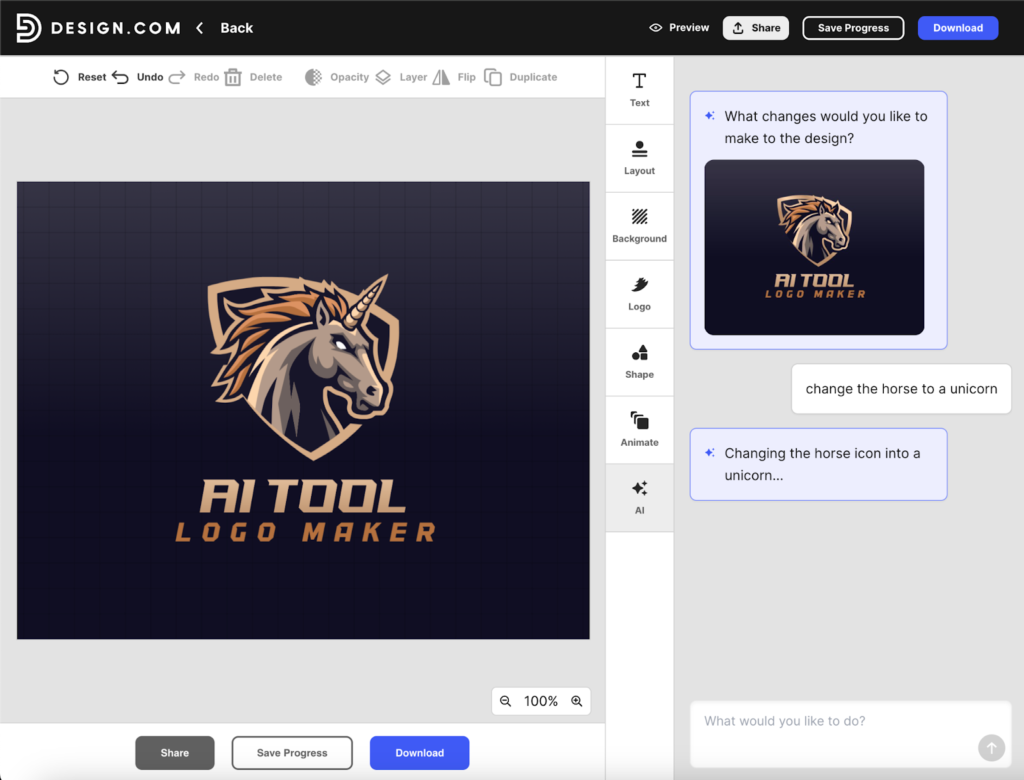

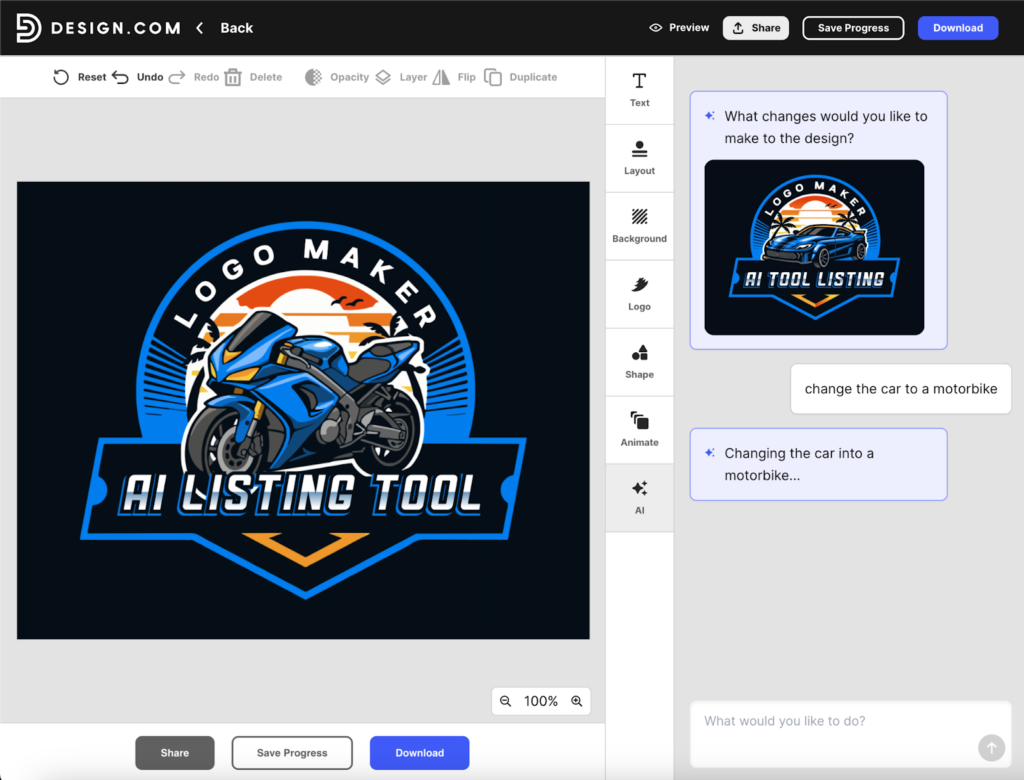

The platform offers users many different features, and the user-friendly interface design comes first among them. The cratosroyalbet mobile site is also easy to access, with no noticeable performance loss when logging in from mobile devices.

Regarding security measures, the platform uses SSL encryption technology. Advanced security protocols are applied to protect user data. Compliance with security standards is maintained in payment transactions as well.

Looking at game diversity, there are hundreds of different game options in the cratosroyalbet casino section. Products from popular providers are featured in the cratosroyalbet slot games category. Additionally, cratosroyalbet live casino options are also frequently preferred by users.

Regarding the customer support team, the platform offers different communication channels within the scope of cratosroyalbet customer service. Options such as cratosroyalbet live support line, cratosroyalbet whatsapp, and cratosroyalbet telegram are available.

CratosRoyalbet Login Procedures and Access Methods

How to Find the Current Login Address?

Access addresses on online betting platforms may change from time to time. To access the cratosroyalbet current login address, users are recommended to follow the platform’s official communication channels. Cratosroyalbet new login address information is generally shared through social media accounts.

Social media platforms like cratosroyalbet twitter and cratosroyalbet instagram are important channels where current address information is shared. Additionally, the cratosroyalbet telegram channel can also be used for this purpose. Regularly checking the cratosroyalbet current address information is important for uninterrupted access.

Thanks to cratosroyalbet alternative login options, users can still connect to the platform when the main address is unreachable. These backup addresses exist so that the user experience is not interrupted.

To access cratosroyalbet new address information, saving important addresses in browser bookmarks is a practical method. This way, the cratosroyalbet login process can be performed more quickly.

Mobile Access and Application Options

For users who want to bet from mobile devices, the cratosroyalbet mobile version offers a very useful solution. The platform works smoothly on all devices with a responsive design approach.

Regarding the cratosroyalbet app, the platform offers a web-based solution optimized for both iOS and Android devices. Those looking for the cratosroyalbet download option can easily access the platform through mobile browsers.

The mobile login process has the same security standards as the desktop version. The cratosroyalbet login process is performed with the same user credentials on mobile devices. The cratosroyalbet sign in page provides quick access with its mobile-friendly design.

After cratosroyalbet member login, mobile users can access all desktop features. After cratosroyalbet account login is made, live betting, casino games, and payment transactions can be performed smoothly from mobile devices.

Membership Procedures: CratosRoyalbet Registration Guide

Step-by-Step Registration Process

For users who want to become members of the platform, the cratosroyalbet registration process consists of a few simple steps. To start the cratosroyalbet membership process, first open the platform’s current login address.

The cratosroyalbet register process includes the following steps:

In the first step, click on the “Sign Up” or “Register” button located on the homepage. The cratosroyalbet new membership form opens and the necessary information is filled in. Basic information such as username, email address, phone number, and password is requested.

In the second step, personal information must be entered accurately and completely. Name, surname, date of birth, and address information are shared at this stage. The accuracy of the information provided in the cratosroyalbet sign up form is important for future withdrawal transactions.

In the third step, the platform’s terms of use and privacy policy are read and approved. Accepting these terms is necessary to complete the cratosroyalbet create account process.

In the final step, registration is completed by entering the verification code sent via email or SMS. The account can then be accessed from the cratosroyalbet login page.

Membership Terms and Points to Consider

There are some basic requirements for platform membership. The most important is that the user must be at least 18 years old. Because of legal responsibilities, membership is not granted to anyone under this age limit.

When creating a cratosroyalbet password, it is recommended to use a strong combination. Passwords containing uppercase letters, lowercase letters, numbers, and special characters should be preferred for account security. If the password is forgotten, it can be reset via email.
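The password recommendation above can be checked mechanically. The following is a minimal sketch, not the platform's own validation logic: the function name `password_issues` and the 12-character minimum length are assumptions made for illustration, and only the four character classes come from the text.

```python
import string


def password_issues(password: str, min_length: int = 12) -> list[str]:
    """Return a list of reasons the password is weak (empty list means none found).

    min_length is an illustrative assumption, not a documented platform rule.
    """
    issues = []
    if len(password) < min_length:
        issues.append(f"shorter than {min_length} characters")
    if not any(c.isupper() for c in password):
        issues.append("no uppercase letter")
    if not any(c.islower() for c in password):
        issues.append("no lowercase letter")
    if not any(c.isdigit() for c in password):
        issues.append("no digit")
    if not any(c in string.punctuation for c in password):
        issues.append("no special character")
    return issues


# A long password that mixes all four character classes passes every check.
print(password_issues("Str0ng!Passphrase"))
# A short, lowercase-only password fails on length, uppercase, digit, and special character.
print(password_issues("password"))
```

A check like this only covers character variety and length. Using a different password for each site matters at least as much.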

The information provided during membership must be compatible with identity verification documents. Since documents may be requested during withdrawal transactions, accurate information sharing is important.

A user is allowed to open only one account. In case of multiple account detection, accounts may be closed according to the platform’s terms of use.

CratosRoyalbet Bonus Campaigns and Promotions

Welcome Bonus Details

Cratosroyalbet bonus opportunities for new members offer quite attractive advantages. The cratosroyalbet welcome bonus is specifically defined for the deposit made after the first membership.

Cratosroyalbet trial bonus options create an important opportunity for users to get to know the platform. The first deposit bonus is generally given at a certain percentage rate. Cratosroyalbet deposit bonus amounts may vary depending on campaign periods.

Getting a cratosroyalbet bonus is quite simple. After membership is completed, the first deposit is made. The bonus is then either credited to the account automatically or requested from customer service.

Cratosroyalbet free spin campaigns are also among the advantages offered to slot game lovers. Cratosroyalbet freespin offers are defined for use in certain slot games.

Regular Campaigns and Wagering Requirements

Cratosroyalbet campaign options are not limited to new members only. Cratosroyalbet promotion opportunities are regularly offered for existing users as well.

Deposit bonuses, loss bonuses, birthday bonuses, and special day campaigns are organized by the platform. Special reward programs are also available for VIP users.

Bonus wagering requirements may differ for each campaign. Generally, a certain multiple of the bonus amount is required to be played. Withdrawal transactions may not be possible without fulfilling wagering requirements.

Which games contribute at what rate to wagering requirements is specified in campaign details. Slot games usually contribute 100%, while some table games may contribute at a lower rate.

Bonus usage periods are also defined in campaigns. Wagering requirements must be completed within a certain time period.
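The arithmetic behind wagering requirements is easy to work through before accepting a bonus. The sketch below assumes a hypothetical 500-unit bonus with a 10x requirement and a table game that contributes 20%. None of these figures come from the platform, and the function names are illustrative.

```python
from decimal import Decimal


def required_turnover(bonus: Decimal, multiplier: int) -> Decimal:
    """Total qualifying bets needed before winnings from the bonus can be withdrawn."""
    return bonus * multiplier


def stake_needed(turnover: Decimal, contribution_pct: int) -> Decimal:
    """Actual amount that must be staked in a game that counts only
    contribution_pct percent of each bet toward the requirement."""
    return turnover * 100 / contribution_pct


# Hypothetical campaign: 500 bonus, 10x wagering requirement.
turnover = required_turnover(Decimal("500"), 10)

print(turnover)                     # 5000
print(stake_needed(turnover, 100))  # 5000 when playing slots at 100% contribution
print(stake_needed(turnover, 20))   # 25000 when playing a table game at 20% contribution
```

In this example, the same bonus takes five times as much play to clear on the low-contribution game. This is why the contribution table in the campaign details is worth reading alongside the multiplier and the time limit.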

CratosRoyalbet Sports Betting Section

Sports Categories and Betting Options Offered

The cratosroyalbet sports betting category offers a wide range. In addition to popular sports such as football, basketball, tennis, and volleyball, more niche sports are also included.

Cratosroyalbet football betting options constitute the most comprehensive category. Bets can be placed on matches from Turkish leagues, European leagues, and many different leagues worldwide. Cratosroyalbet basketball betting options also cover NBA, EuroLeague, and Turkish league matches.

In addition to the cratosroyalbet iddaa-style classic betting coupon system, modern betting options are also available. Markets include handicap, over/under, and half-time/full-time result, among many others.

Cratosroyalbet betting odds are set with the market average in mind. The platform tries to offer competitive odds on important matches.

Live Betting Experience

The cratosroyalbet live betting platform offers the opportunity to bet while matches are ongoing. Instantly changing odds and match statistics are presented to users.

The live betting interface displays match scores, statistics, and important events in real-time. Live streaming options may also be offered to users for some matches.

Thanks to the cash-out (early payment) feature, users can close their coupons before the match ends. This feature becomes an important tool in terms of risk management.

Many different matches can be followed simultaneously in the live betting section. Easy access can be provided by marking favorite matches.

CratosRoyalbet Casino Games

Slot Games Diversity

The category most preferred by casino lovers is slot games. The platform offers a rich game portfolio by working with leading game providers.

Popular slot games like cratosroyalbet sweet bonanza are frequently played by users. There is a wide range of options from classic slot machines to video slots and progressive jackpot games.

Most slot games have a demo (trial) mode. This way, users can test games without depositing real money. Game mechanics, features, and payout tables can be learned in demo mode.

Industry leaders such as Pragmatic Play, NetEnt, and Evolution Gaming are among the popular game providers. Each provider has its own unique game themes and features.

Live Casino Tables and Other Games

Live casino options are an ideal choice for those seeking a game experience accompanied by real dealers. Different variations such as European, American, and French roulette are offered on cratosroyalbet roulette tables.

Classic blackjack, Speed Blackjack, and versions with various side bets are available on cratosroyalbet blackjack tables. Games are broadcast live accompanied by professional dealers.

Cratosroyalbet poker options include games such as Caribbean Stud Poker and Casino Hold’em. Baccarat is also available, along with popular live game shows such as Monopoly Live and Dream Catcher.

VIP tables are specially prepared for users who want to play with high limits. Although minimum bet limits are higher on these tables, special advantages are offered.

Video poker, scratch card games, and virtual sports are among the other game categories offered within the platform.

Just as streaming platforms provide entertainment for sports fans, casino platforms offer diverse gaming experiences with proper security measures in place.

Deposit and Withdrawal Transactions

Payment Methods and Limits

Many different methods are offered for cratosroyalbet deposit transactions. Bank transfer, credit card, and electronic wallet options are found in the cratosroyalbet payment methods category.

For cratosroyalbet bank transfer transactions, users can view bank account information specific to the platform from the customer panel. It is recommended to write the username or customer number in the description section when making a transfer.

E-wallet systems like cratosroyalbet papara offer fast and secure transaction advantages. Transfers made from Papara accounts are usually reflected to the account instantly.

Cratosroyalbet minimum deposit amounts may vary depending on campaigns and payment methods. Generally, the opportunity to start with low limits is offered.

Maximum deposit limits may also vary according to user levels. Higher limits are defined for VIP members.

Withdrawal Process and Important Notes

For the cratosroyalbet withdrawal transaction, the account verification process must first be completed. Documents such as identity documents and address documents may be requested.

Cratosroyalbet withdrawal limit information can be viewed in the user panel. Daily, weekly, and monthly withdrawal limits are determined. The cratosroyalbet withdrawal time depends on the payment method.

Withdrawals to e-wallet systems are generally processed faster. Transfers to bank accounts may take several business days.

Bonus wagering requirements must be completed before a withdrawal can be made. Withdrawals may not be possible from accounts with active bonuses.

Since a security check is performed during the first withdrawal transaction, the process may take a bit longer. Subsequent withdrawals are processed faster.

There may be transaction fees applied by payment providers. A minimum withdrawal amount is determined by the platform.

Customer Services and Support Systems

Communication Channels and Accessibility

The platform offers users multiple communication channels. The cratosroyalbet support team can be reached through different ways.

Among cratosroyalbet contact options, the live chat feature is the channel that offers the fastest solution. Live support accessible through the site is active during most hours of the day.

Support requests can also be made via email. This method can be preferred for more detailed problems or complaints. Generally, a response is given within 24 hours.

Communication can also be established through social media accounts. These channels are especially useful for current login address or campaign information.

Support Quality and User Satisfaction

The customer service team’s provision of Turkish language support is an important advantage for local users. Explaining problems clearly and resolving them becomes easier.

The support team assists with issues such as technical problems, payment issues, and bonus requests. The frequently asked questions (FAQ) section also provides information on many topics.

Response times and solution quality play an important role in the platform’s user satisfaction. When cratosroyalbet reviews are examined, various feedback about customer service can be seen.

Understanding customer service excellence in online platforms helps users know what to expect from support systems across different digital services.

CratosRoyalbet Reliability Analysis

License and Legal Status

One of the most important criteria when choosing an online betting platform is reliability. Whether cratosroyalbet is reliable is a question users frequently research.

Cratosroyalbet license information is expected to be located at the bottom of the platform or in the “About Us” section. The licensing authority and license number can be checked.

Legal regulations vary by country. There are certain restrictions on online betting in Turkey. It is important for users to know the legal situation in their own countries.

Agreements made with game providers and payment systems also give clues about the platform’s reliability. Working with recognized companies in the sector is a positive indicator.

Security Technologies and Data Protection

The platform uses SSL (Secure Sockets Layer) encryption technology to protect user data. In this way, personal information and financial transactions are transmitted in encrypted form.

Strong password policies are implemented for account security. Additional security measures such as two-factor authentication may be offered.

The privacy policy states that personal data will not be shared with third parties. Compliance with data protection laws is expected.

Regular security audits and system updates are important for the platform’s technical security.

User Experiences and Complaints

Independent platforms that conduct cratosroyalbet reviews share user feedback. In these reviews, positive and negative aspects are addressed objectively.

Cratosroyalbet complaint topics are generally related to withdrawal delays, bonus terms, or customer service. How the platform management approaches these complaints is important.

User forums and social media platforms are areas where real user experiences are shared. However, it should not be forgotten that this feedback may not be completely objective.

Overall user satisfaction plays a critical role in the platform’s long-term success. While positive experiences support new user acquisition, negative experiences can lead to reputation loss.

Platform Advantages and Disadvantages

Prominent Strengths

Some advantages that stand out during platform usage can be listed as follows:

Game Diversity: A wide range of options is offered from sports betting to casino games. There is content that will appeal to all types of users.

Mobile Compatibility: Access from anywhere is possible thanks to the cratosroyalbet mobile version. Responsive design works smoothly on all devices.

Bonus Campaigns: Various bonus opportunities are regularly offered for new and existing members. Welcome bonuses are at competitive rates.

Payment Options: Different payment methods provide flexibility to users. Fast transactions can be made with e-wallet systems.

Live Betting: The opportunity to bet while matches are ongoing is offered. Live streaming features enrich the user experience.

Customer Support: Support can be obtained through multiple communication channels. Turkish language support is an advantage for local users.

Areas for Improvement

As with any platform, there are some aspects that need improvement:

Withdrawal Times: Some users may complain that withdrawal transactions take longer. The process can be accelerated.

Bonus Wagering Requirements: High wagering requirements may create a disadvantage for some users.

Verification Process: The detailed identity verification process for the first withdrawal may be cumbersome for some users.

Legal Uncertainties: Clearer information can be provided about the license status and legal legislation.

Address Changes: Frequent changes in access addresses can be confusing for users.

Frequently Asked Questions

How to become a member of CratosRoyalbet?

The membership form is filled out by clicking the “Register” button on the platform’s homepage. Personal information, contact information, and account information are entered. Membership becomes active after the verification code sent via email or SMS is confirmed. The cratosroyalbet registration process can be completed within a few minutes.

What is the minimum deposit amount?

The minimum deposit amount may vary depending on the payment method and current campaigns. Generally, the opportunity to start with low amounts is offered. The deposit page can be checked after logging into the platform for the exact amount.

How to get the welcome bonus?

The welcome bonus is credited to the account as a result of the first deposit transaction made after the first membership. In some cases, a bonus code may need to be entered. If the bonus is not automatically activated, it can be requested by contacting customer service. It should not be forgotten that wagering requirements must be met to use the bonus.

How long does the withdrawal transaction take?

The cratosroyalbet withdrawal time depends on the payment method. Withdrawals to e-wallet systems are generally completed within 24 hours. Transfers to bank accounts may take 1-5 business days. The first withdrawal transaction may take longer due to security checks.

Is the platform reliable?

When researching reliability, license information, user reviews, and independent reviews should be examined. The use of security technologies such as SSL encryption is a positive indicator. Users are advised to do their own research to decide whether cratosroyalbet is reliable.

Is there a mobile application?

The platform offers a web-based mobile-friendly system. Instead of downloading a separate mobile application, access to the platform can be provided through mobile browsers. All features can be used from mobile devices thanks to responsive design.

What sports betting is available?

Popular sports such as football, basketball, tennis, volleyball, handball, and ice hockey are among the betting options. Additionally, alternative sports such as e-sports, table tennis, and darts are also available. With live betting options, bets can also be placed while matches are ongoing.

Which game pays out the most on Cratosroyalbet?

Each game has a different RTP (Return to Player) rate. Generally, this rate in slot games is determined by the game provider. Games with high RTP rates theoretically provide more payback. However, casino games are based on chance and there is no guaranteed win.
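What an RTP figure means in practice can be shown with a short calculation. The sketch below uses hypothetical RTP values of 96% and 94% and a hypothetical total stake of 1,000 units. The function name is illustrative, and the result is a long-run statistical average, not a prediction for any single session.

```python
def expected_loss(total_staked: float, rtp_percent: float) -> float:
    """Long-run average loss implied by an RTP figure.

    RTP is the share of all stakes a game pays back over a very large
    number of rounds; the remainder is the house edge.
    """
    return total_staked * (1 - rtp_percent / 100)


# Hypothetical comparison of two games over 1,000 units of total stakes.
for rtp in (96.0, 94.0):
    print(rtp, round(expected_loss(1000, rtp), 2))
```

Even a game with a comparatively high RTP has a negative expected value for the player, which is consistent with the point that no win is guaranteed.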

Conclusion: CratosRoyalbet Evaluation

For those looking for an online betting and casino platform, cratosroyalbet stands out as one of the noteworthy options. Wide game range, various bonus campaigns, and different payment methods are among the platform’s strengths.

Thanks to mobile compatibility, users can access the platform from anywhere and experience betting or casino games without interruption. The provision of multiple communication channels regarding customer service is also a positive feature.

However, as with any platform, there are some areas that need improvement. Users are recommended to be careful about withdrawal times, bonus wagering requirements, and legal uncertainties.

When choosing a platform, it is important to consider personal preferences, budget, and gaming habits. Cratosroyalbet may be a suitable option for users who are interested in sports betting and casino games, value mobile access, and want to benefit from bonus campaigns.

Responsible gaming principles should always be a priority, and the set budget should not be exceeded. Betting and casino games should be for entertainment purposes and should not create financial problems. Users who have difficulty controlling their play should seek professional help.

Finally, conducting detailed research before becoming a member of any platform, reading user reviews, and carefully examining the platform’s terms are the best methods to avoid negative experiences.
